You Know AI. You Just Can't Prove It.

The Credentialing Paradox for Experienced Operators

In 1999, I was a Canadian teenager living in Brazil.

English was my first language. I'd grown up reading in it, thinking in it, dreaming in it. I'd been building things on computers since I could reach the keyboard — game mods, a chatbot in BASIC, an HTML site back when that meant something, a BBS I ran with my friends.

And yet, when I looked around at my peers in Brazil, they all had something I didn't: a certificate that said they knew English.

From a local ESL school. Twenty hours of instruction. A piece of paper.

Their English was functional at best. Mine was native. But in the context that mattered — a resume, a job application, a first impression — their capability was visible and mine wasn't.

I didn't know how to prove I spoke English. I just... spoke it.

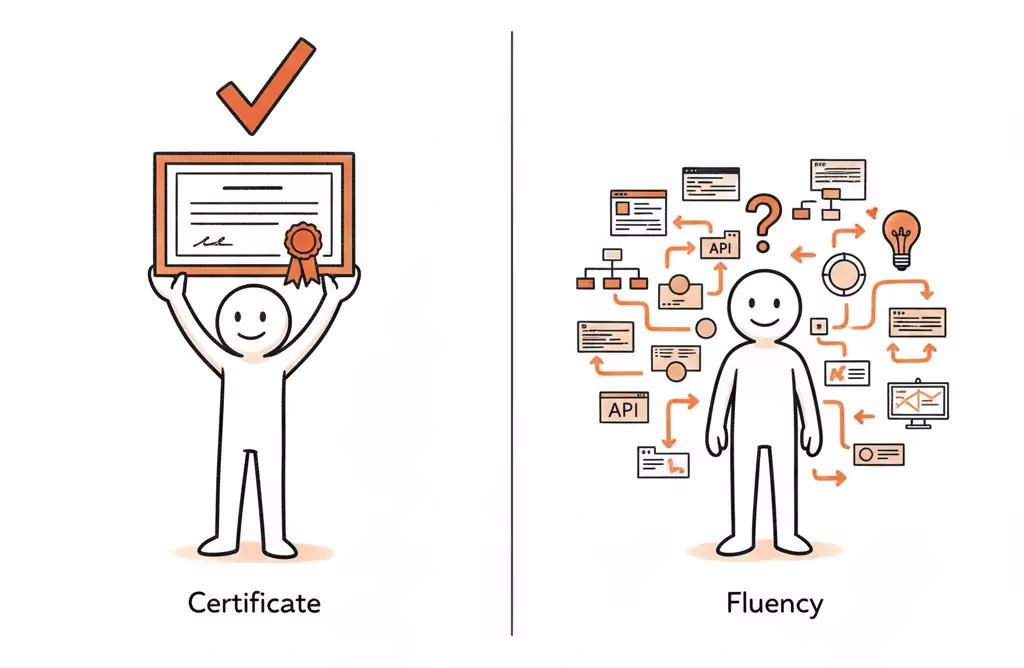

The core tension

The people with the deepest capability are often the worst at signaling it. The market rewards legible credentials. And right now, "AI literacy" is being defined by the people handing out the certificates — not the people doing the work.

The Certificate Economy

This pattern should feel familiar to anyone who's been in technology long enough.

In the early 2000s, knowing "how to use Word" was a resume line. People took courses for it. Got certificates. Meanwhile, the people who had been using computers since DOS didn't list anything — because what would they even write? "Has used computers for 15 years"?

The same thing happened with "social media expertise" around 2010. Then "data-driven decision making." Then "digital transformation."

Every time a new capability becomes important, the same cycle plays out:

1. A real skill emerges from practitioners doing the work.

2. The market notices it matters.

3. A credentialing industry forms around it.

4. The credential becomes the proxy for the skill.

5. The people who had the skill first are suddenly behind — because they never needed the credential.

We're at step 5 with AI.

What "Knowing AI" Actually Looks Like

I've spent over twenty years building things with data and technology. Regression models. Analytics frameworks. System architectures. Production applications with LLM API calls. Prompt engineering pipelines. Agentic workflows — scraping, data enhancement, ETL, analysis, summarization. Full system designs from data layer to output.

None of that comes with a badge.

You know what does come with a badge? A free four-hour course on Coursera.

And I want to be clear: I'm not disparaging anyone who takes those courses. Learning is learning. But there's something structurally broken when the person who completed an introductory course has a more legible AI credential than the person who's been building production systems for years.

"Include AI on your LinkedIn profile" is the new "include Microsoft Office on your resume." It sounds like advice. It's actually a symptom.

A symptom of a market that doesn't yet know how to evaluate what it's asking for.

The Legibility Problem

Here's what's actually happening beneath the surface.

When a hiring manager, a potential client, or even a LinkedIn algorithm encounters your profile, they're pattern-matching. They're scanning for signals. And the signals they've been trained to recognize are credentials, keywords, and badges — not demonstrated capability.

This creates a specific disadvantage for experienced operators.

If you've spent years building with data and AI, your actual work is probably:

- Embedded in proprietary systems you can't show publicly

- Described in terms that don't match trending keywords

- Spread across so many domains that it doesn't fit neatly into "AI experience"

- So integrated into how you think that you don't even recognize it as a distinct skill

That last one is the real killer. When something is native to you, you forget it's a skill at all.

Just like I forgot that speaking English was something I'd need to prove.

The Fluency Trap

There's an irony here that's worth naming.

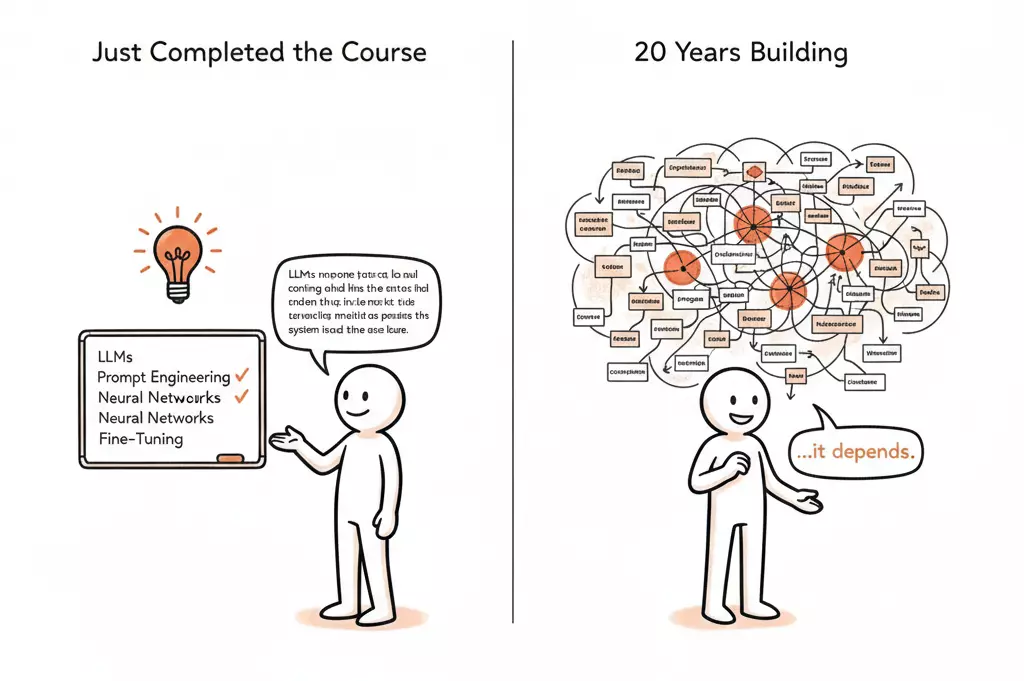

The more fluent you are in something, the harder it becomes to articulate what you know. Beginners can describe exactly what they learned — they just completed the module. Experts operate on intuition they've never had to decompose.

Ask a new AI course graduate what they learned, and they'll give you a structured answer. Concepts, frameworks, terminology — freshly organized.

Ask someone who's been building production AI systems for five years what they know about AI, and you'll probably get a pause. Then something like: "It depends on what you mean by AI."

That pause isn't ignorance. It's the weight of context. But it reads as uncertainty to someone scanning for confidence signals.

What This Means for Solo Consultants

If you're an independent operator — already wearing every hat, already drowning in context-switching — this credentialing gap creates a specific, practical problem.

You need to be visible in your market. You need clients and hiring managers to understand what you bring. And the market has decided that "AI capability" is something it wants to see.

But you can't just slap a badge on your profile and feel good about it. Because you know — from decades of experience — that the badge doesn't represent what you actually do.

It feels disingenuous. And for people who've built careers on substance over posturing, that discomfort is real.

You're not struggling because you lack capability. You're struggling because the capability you have doesn't fit the container the market is looking for.

Reframing the Problem

This is, at its core, a constraint problem. And like most constraint problems, the solution isn't to optimize inside the broken frame. It's to restructure it.

The broken frame says: prove you know AI by collecting credentials.

The restructured version says: make the work legible.

These are not the same thing. A credential says "I studied this." Legible work says "I built this, here's what it does, and here's the problem it solved."

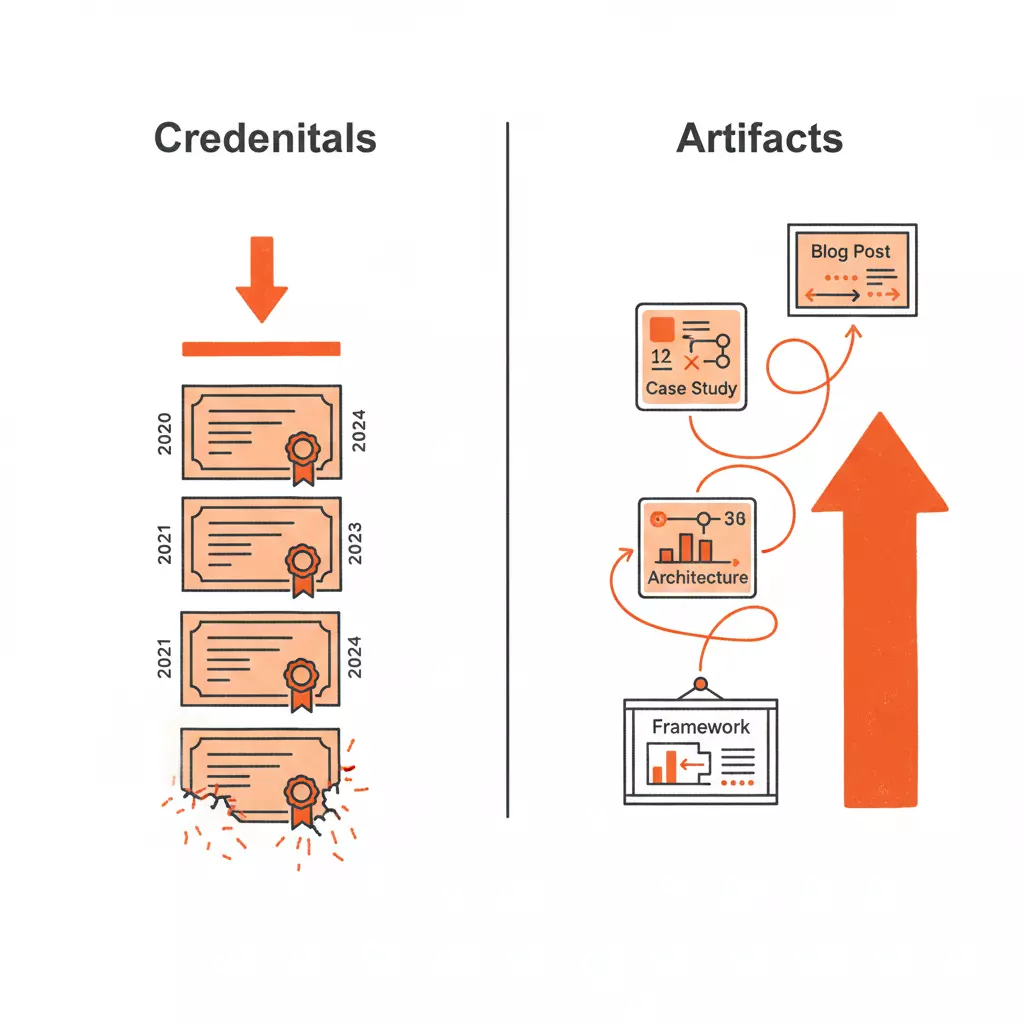

The Shift from Credential to Artifact

The experienced operators I've talked to who are cutting through this problem aren't collecting badges. They're producing artifacts.

Not portfolios in the traditional sense. But visible, tangible evidence of how they think and what they build:

What Legible Work Looks Like

Frameworks that show your thinking. Not "I completed an AI course" but "here's how I categorize AI tools based on what role they actually play in a business." The Workspaces, Connectors, Capabilities framework didn't come from a textbook. It came from watching dozens of consultants struggle with the same problem.

Case studies with structural insight. Not "I used ChatGPT for marketing" but "here's how I redesigned a data pipeline so that operational signals replaced survey data as the primary input for customer experience measurement." The specificity is the credential.

Systems you've designed, explained clearly. Walk someone through an architecture decision. Explain why you chose agentic scraping over manual collection. Show the before and after of a workflow you rebuilt. The explanation is the proof.

This is harder than collecting badges. It requires you to decompose expertise that you've internalized, translate it into language that non-experts can follow, and publish it somewhere visible.

But it's also more durable. Badges expire or become irrelevant. A well-articulated framework or case study compounds. It becomes a reference point. It signals depth in a way that no certificate can.

The 1999 Problem, Solved Differently

When I look back at Brazil, the thing that eventually made my English fluency legible wasn't a certificate. It was the work itself. Writing. Speaking. Building things in English that other people could see and use.

Nobody asked for the certificate once the output was visible.

That's the same shift experienced operators need to make with AI. Stop trying to prove you know AI. Start making the knowledge visible through what you produce.

This doesn't mean the credentials are worthless. For someone early in their career, a course is a legitimate starting point. It gives structure, vocabulary, a foundation.

But for the person who's been building production systems, designing data architectures, and shipping real solutions for years? The course isn't the answer. The answer is making the work you've already done — and the thinking behind it — legible to people who don't yet know how to evaluate what you bring.

The Uncomfortable Part

Here's what nobody wants to admit: this is a communication problem, not a capability problem.

And for many experienced operators, communication — specifically, self-promotion — is the hat they're least comfortable wearing.

You can design a system. You can architect a pipeline. You can see patterns in data that other people miss. But writing a LinkedIn post that says "here's what I actually know about AI" without feeling like you're performing?

That's a different kind of hard.

It's the same tension solo consultants face everywhere else: you know what good looks like. You just can't do everything at once. And the thing you're best at, the actual work, is often the thing that's least visible.

The credential economy thrives on that gap. It monetizes the space between what you can do and what you can prove. And it will keep thriving as long as experienced operators stay silent about what they actually know.

The paradox: The people most qualified to define what AI fluency actually means are the least likely to participate in the conversation about it. And the conversation is being shaped without them.

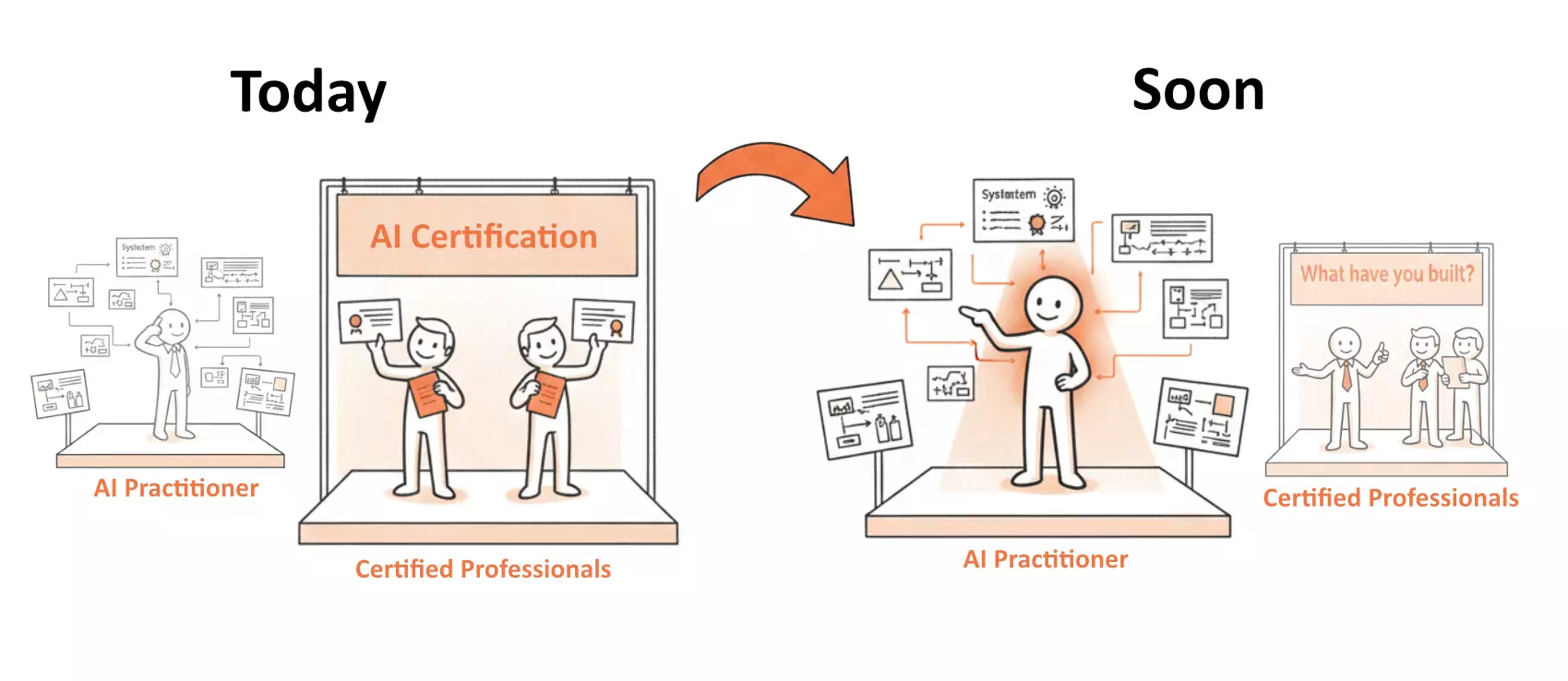

What Changes

I don't think the credential economy is going away. It serves a purpose — especially for people entering a field for the first time.

But I do think the market will eventually catch up. The same way "Microsoft Office proficiency" stopped being a resume line once everyone had it, "AI literacy" will stop being a differentiator once the floor rises.

When that happens — and it's already starting — the market will shift from asking "do you know AI?" to asking "what have you built with it?"

And the people who spent those years building, designing, and shipping will finally have the advantage their experience always deserved.

The question is whether you'll be visible when that shift happens. Or whether you'll still be the native speaker in a room full of certificates, waiting for someone to notice.

What's the thing you've built that you've never figured out how to put on a resume?

The system, the framework, the solution that's too embedded in context to fit into a credential. I'd genuinely like to hear about it.

— Raf Alencar