The Process Owns the Requirement

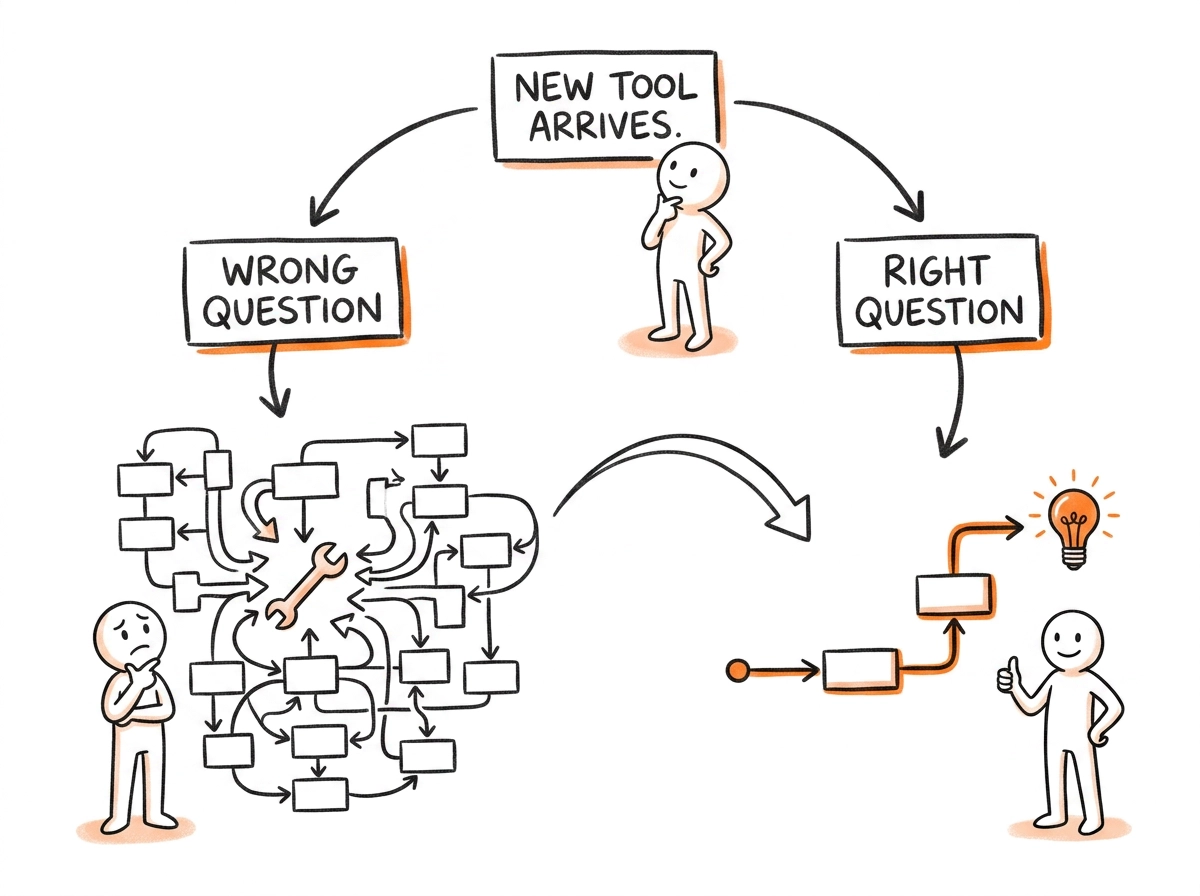

Most organizations ask the wrong question before deploying a new tool. Here's what they should be asking instead.

The core argument

Every failed AI implementation has the same upstream error: the organization asked how to make its current process work with a new tool, instead of asking what the process actually needs to accomplish. The tool is always the last decision. The process owns the requirement. When you reverse that sequence, you don't just get bad AI adoption — you get your existing dysfunction running faster.

There's a question almost every organization asks before a technology deployment.

It sounds reasonable. It sounds practical. It's the question that gets asked in the vendor evaluation meeting, in the pilot planning session, in the steering committee kickoff. And it virtually guarantees a disappointing result.

The question is: how do we make our current process work with this new tool?

It sounds like the right question. It frames the technology as serving the business. It respects the existing process. It feels responsible — we're not blowing everything up, we're integrating thoughtfully.

But it starts from the wrong end.

It assumes the current process is correct — that the process requirements are fixed and the tool's job is to slot in around them. That assumption is rarely examined. And when it's wrong, every subsequent decision compounds the error.

What Happens When You Ask the Wrong Question.

The wrong question produces a specific and predictable outcome. The tool gets integrated into the existing process at the point of least resistance. A step gets added to the flowchart — "consult AI," "run through the model," "check with the system." The people doing the work adjust slightly. Some time gets saved on specific tasks. The process structure remains intact.

This gets called transformation.

It isn't. More precisely, it is a hotline. You added a resource to an existing process. The process still requires someone to remember the resource exists, know what question to ask, interpret the response, and decide what to do with the answer. The accountability structure is unchanged. The approval layers are unchanged. The incentive environment is unchanged.

You gave the organization a better tool for executing the same flawed process at the same speed, with slightly less friction in one specific place.

The dishwasher was not designed to be a better pair of hands. It was designed to make hands irrelevant to the problem.

Most AI implementations are designing a better pair of hands. They're optimizing the human motion rather than asking whether the human needs to be in the loop at all — and if so, exactly where, and for what.

The Lindy Trap.

There's a reason the wrong question is so persistent. It's not ignorance. It's a cognitive bias that operates on organizations the way it operates on individuals: the longer something has survived, the more legitimate it feels.

Your current process has been running for years. Maybe decades. It has been refined, documented, trained on. People have built careers around executing it well. It survived reorgs, system changes, leadership transitions, and previous waves of technology adoption. Its longevity feels like proof of its fitness.

But survival in the old environment doesn't mean optimality in the new one.

The process was designed around the constraints that existed when it was built — the tools available, the data accessible, the speed of information, the cost of computation, the skills required to execute. Most of those constraints have changed. Many have collapsed entirely. But the process didn't update because nobody questioned whether it should. It just kept running.

The test. Take any recurring process in your organization and ask: if you were designing this from scratch today — knowing what's now possible with AI, automation, and real-time data — would you design it this way?

If the honest answer is no, you're not looking at a process that needs a better tool. You're looking at a process that needs to be rebuilt from the requirement up.

The tool comes last. The requirement comes first.

The Right Question.

The right question is harder. It requires setting aside the existing process entirely — not permanently, but long enough to ask what the process actually needs to accomplish.

What outcome does this process exist to produce?

Not what it does. What it needs to produce. The decision it needs to enable, the value it needs to generate, the problem it needs to solve. That's the requirement. The process is just the current solution to that requirement. And like most current solutions, it was designed for a different era with different constraints.

Once you have the requirement, the next question is: given everything available today — humans, AI agents, automation, real-time data, any combination — what would be the most effective way to produce that outcome?

That question produces something different from the wrong question. It doesn't assume the existing process structure is correct. It doesn't start from the current step sequence and ask where to insert the new tool. It starts from the outcome and builds back to the process.

The wrong question: "How do we make our current process work with this new tool?" It starts from the process, assumes the structure is correct, and inserts the tool at the point of least resistance. It produces incremental improvement at best and dysfunction at speed at worst.

The right question: "What does this process need to accomplish, and given everything available today, what's the best way to accomplish it?" It starts from the requirement, questions the structure, and builds the process around the outcome. It produces genuine redesign.

What Genuine Redesign Actually Looks Like.

When you start from the requirement, three things become possible that aren't possible when you start from the existing process.

Some steps get eliminated entirely. Not automated — eliminated. They existed because information was slow, capability was scarce, or approval was required for things that no longer require approval. When you ask what the outcome actually needs, many of the intermediate steps reveal themselves as coordination overhead from a different era. They don't need to be done faster. They need to stop being done.

Accountability shifts upstream. When AI takes over execution, the human role doesn't disappear; it moves. Instead of executing the steps, humans become responsible for defining the parameters, setting the thresholds, deciding what the system should optimize for, and intervening when something falls outside the expected range. That's a more valuable role. It requires more judgment and less time. But it only becomes possible when the process is redesigned to accommodate it, not when a chatbot is added to the existing process.

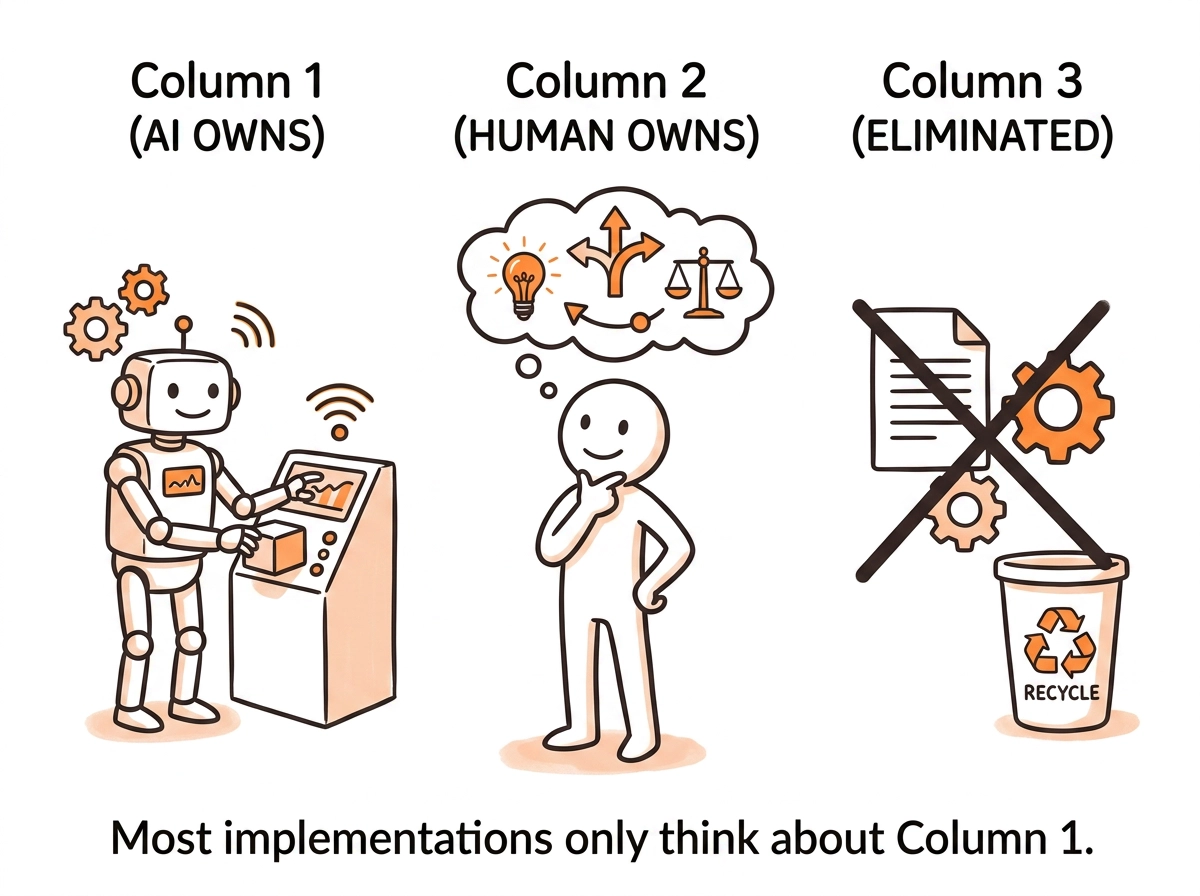

The right actor gets assigned to the right task. The question isn't "where can AI help?" — that question leaves the existing task assignment intact and adds AI as an optional resource. The right question is: what should AI own entirely, what should stay human, and what should be eliminated? Those are three different categories. Most implementations only think about the first.

The Incentive Problem That Undermines Redesign.

Here's what makes this hard in practice — and it has nothing to do with technology.

Genuine process redesign threatens existing accountability structures. When a step gets eliminated, someone who owned that step loses a function. When accountability shifts upstream, someone who was accountable for execution is now accountable for something harder to measure. When AI takes ownership of a task category, someone who was valued for executing those tasks has to find their value elsewhere.

None of this is fatal. But all of it is uncomfortable. And in most organizations, the people with the authority to approve a genuine process redesign are the same people whose authority is partly derived from the existing process structure.

This is why the wrong question persists even when leaders understand it's wrong. The right question is organizationally threatening. The wrong question lets everyone keep their function while appearing to embrace the new capability. It's not stupidity. It's rational self-preservation inside an environment that was never designed to reward genuine redesign.

Which means the process redesign problem is actually an environment design problem. The question isn't just what this process needs to accomplish. The question is: have we designed the incentive environment so that genuine redesign is the rewarded behavior, or does our environment select for the appearance of change over its substance?

Most organizations, if they answer honestly, discover the latter.

The Sequence That Works.

For organizations that have decided to get this right, the sequence matters as much as the questions.

Start with the outcome. What does this process need to produce? Name it in terms of decisions enabled, value generated, or problems solved — not in terms of reports generated, steps completed, or approvals processed.

Map the requirement. Given the outcome, what information, judgment, and action does producing it actually require? Not what the current process requires — what the outcome requires. Those are usually very different lists.

Assign actors to requirements. For each requirement, ask: what type of actor is best suited to this? AI for execution at scale, data retrieval, pattern recognition, and consistent application of defined rules. Humans for judgment under ambiguity, exception handling, relationship-dependent decisions, and anything that requires genuine accountability. Eliminate everything that serves neither the outcome nor a genuine actor requirement.

Design the environment to support the redesigned process. This is the step most implementations skip entirely — and it's why the redesigned process reverts to the old one within six months. If the incentive environment still rewards the behaviors that built the old process, the new process will be gamed back toward familiarity. Redesigning the process without redesigning the environment that surrounds it is renovation work inside a building that's going to be demolished.

Then select the tools.

The tools are the last decision. Not because they're unimportant — they matter enormously. But because every tool decision made before the process is redesigned is a tool decision made to serve the wrong process.
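For readers who think in code, the actor-assignment step of the sequence above can be sketched roughly as follows. This is only an illustration under simplifying assumptions: the `Requirement` fields, the `assign_actor` rule, and the example requirements are all hypothetical, and real assignment decisions involve far more nuance than two yes/no flags.

```python
from dataclasses import dataclass
from enum import Enum


class Actor(Enum):
    AI = "ai"
    HUMAN = "human"
    ELIMINATE = "eliminate"


@dataclass(frozen=True)
class Requirement:
    """One thing the outcome might require (hypothetical model)."""
    name: str
    serves_outcome: bool   # does producing the outcome actually need this?
    needs_judgment: bool   # ambiguity, exceptions, relationships, accountability


def assign_actor(req: Requirement) -> Actor:
    # Anything that does not serve the outcome is eliminated, not automated.
    if not req.serves_outcome:
        return Actor.ELIMINATE
    # Judgment-heavy requirements stay with humans.
    if req.needs_judgment:
        return Actor.HUMAN
    # What remains is rule-based execution, assumed here to suit AI.
    return Actor.AI


def assign_all(requirements: list[Requirement]) -> dict[Actor, list[str]]:
    """Group requirement names by the actor assigned to them."""
    plan: dict[Actor, list[str]] = {actor: [] for actor in Actor}
    for req in requirements:
        plan[assign_actor(req)].append(req.name)
    return plan


# Hypothetical requirements for an illustrative "pay suppliers correctly" outcome.
EXAMPLE = [
    Requirement("match invoice to purchase order", True, False),
    Requirement("resolve a disputed charge", True, True),
    Requirement("weekly status report nobody reads", False, False),
]

plan = assign_all(EXAMPLE)
```

Note what the sketch leaves out on purpose: nothing in it names a tool. The inputs are the outcome's requirements, and the output is an assignment of actors, which is the point at which tool selection can begin.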

The organizations seeing transformational results from AI aren't the ones that found better tools. They're the ones that asked a better question before selecting any tools at all.

What does this process need to accomplish? Given everything available today — what's the right way to accomplish it?

That question, asked honestly, produces a different process. A different process, designed intentionally, produces a different outcome. And that's the gap between AI implementations that compound and AI implementations that stall.

There's a process in your organization right now that's waiting for this question.

Not the one that got the AI pilot. The one everyone knows is broken but nobody has redesigned because the environment never made redesign the obvious move. That's the one worth starting with.

The next piece in this series is about the function that should own the environment design question — and why it almost never does. It isn't HR. It isn't Finance. It isn't Strategy. It's the intersection of all three, with a mandate none of them currently hold.

— Raf Alencar